AI for CX Is Not the Same as AI for Repetitive Tasks: A Gartner Prediction Every CX Leader Should Rethink

I’ve spent my career working across customer experience, automation, and large‑scale testing, and one thing I keep coming back to is this: AI that performs simple, repetitive work is becoming cheaper and more accessible, but AI that touches real customers at scale will never be easy or low risk.

Gartner’s recent prediction that GenAI cost per resolution in customer service will exceed $3 by 2030, higher than the cost of many offshore agents, reinforces this reality. The real question is no longer “Can AI replace humans at a lower unit cost?” but “Do we trust autonomous systems enough to let them act on behalf of our brand and our customers, at scale, in the places that matter most?”

AI that operates autonomously inside real customer journeys – call it agentic CX – is different. Because it makes complex decisions on its own, it introduces a new class of operational and governance risk.

Worse, traditional CX assurance tools weren’t built to manage this level of AI autonomy. They’ll tell you systems and applications are operational, end of story. What they won’t tell you is where AI can silently fail inside a customer journey through incorrect handoffs, hallucinations, and other unwanted behaviors. That’s the AI assurance gap.

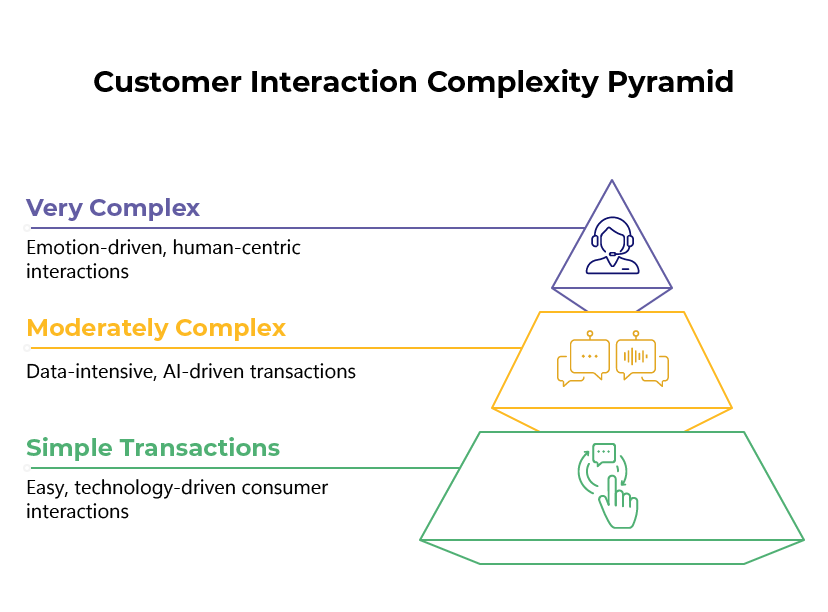

Navigating Complexity with the CX Interactions Pyramid

When I advise enterprises, I ask them to picture their customer interactions as a three‑tier pyramid.

Bottom tier: High‑volume, low‑complexity tasks such as balance checks or simple FAQs that customers willingly handle through IVRs and basic self‑service. Traditional automation is proven here, and the economics already work.

Middle tier: Moderately complex journeys that start as simple requests but quickly branch into multiple lookups, decisions, and context‑dependent paths. In my view, this is the sweet spot for AI, because large models and orchestration frameworks can synthesize data and options faster than human agents, yet each error carries meaningful cost and risk.

Top tier: High‑stakes, emotionally charged situations where history, context, and relationship matter, and where customers still expect human empathy and judgment. This is where your best agents, and your highest cost per interaction, live today.

Gartner’s cost projection is largely about the middle tier where AI starts to behave like an “always on” agent. That is where token‑intensive architectures, orchestration layers, and complex, real‑world use cases drive up infrastructure and vendor costs, even as enterprises push more work into AI‑led channels.

It’s also where failure has the greatest impact. Missed resolutions at scale can erase the perceived savings and damage your brand faster than almost any other part of the stack.

Why GenAI Costs Rise as You Move Up the Pyramid

On paper, using AI to perform repetitive tasks is compelling because those tasks are narrow, predictable, and can often be handled with lighter models and deterministic logic. In contrast, the cost profile of AI for CX changes as you move into the middle of the pyramid and ask models to handle open‑ended journeys that look more like human agent work.

From what I see in the market, several forces are driving GenAI resolution costs upward in that middle tier:

Significant data center and infrastructure investment to serve large models at production scale and latency.

Use of orchestration patterns, including Model Context Protocol and agent frameworks, that increase context windows, tool calls, and token usage per interaction.

Vendors moving from growth subsidies to sustainable pricing that reflects real compute and platform costs.

Complex real‑world scenarios that require richer prompts, multiple back‑end integrations, and expert design, testing, and maintenance.

This is why I tell executives to be very careful about assuming that AI will automatically undercut offshore agents on a simple cost‑per‑resolution basis. The economics are more nuanced once you include the full stack and the cost of getting it wrong.
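To make that cost logic concrete, here is a minimal Python sketch of how the forces above could combine into a cost per resolution. Everything in it is an assumption for illustration: the `InteractionProfile` fields, the `cost_per_resolution` function, the per‑token price, and the platform fee are hypothetical placeholders, not Gartner figures or any vendor's pricing. The point is only the shape of the arithmetic: more turns, more tool calls, and a lower resolution rate all push the unit cost up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InteractionProfile:
    """Hypothetical description of one class of AI-handled conversation."""
    turns: int                 # model calls per conversation
    tokens_per_turn: int       # prompt + completion tokens per model call
    tool_calls: int            # back-end lookups per conversation
    tokens_per_tool_call: int  # extra context each tool result adds
    resolution_rate: float     # share of conversations the AI resolves (0..1]


def cost_per_resolution(profile: InteractionProfile,
                        price_per_1k_tokens: float,
                        platform_fee_per_interaction: float = 0.0) -> float:
    """Spread the cost of every conversation over the ones actually resolved."""
    if not 0 < profile.resolution_rate <= 1:
        raise ValueError("resolution_rate must be in (0, 1]")
    tokens = (profile.turns * profile.tokens_per_turn
              + profile.tool_calls * profile.tokens_per_tool_call)
    cost_per_interaction = (tokens / 1000 * price_per_1k_tokens
                            + platform_fee_per_interaction)
    # Unresolved conversations still cost money, so divide by the resolution rate.
    return cost_per_interaction / profile.resolution_rate


if __name__ == "__main__":
    # Illustrative inputs only; replace with your own measured values.
    bottom_tier = InteractionProfile(turns=2, tokens_per_turn=500,
                                     tool_calls=0, tokens_per_tool_call=0,
                                     resolution_rate=1.0)
    middle_tier = InteractionProfile(turns=10, tokens_per_turn=2000,
                                     tool_calls=5, tokens_per_tool_call=1000,
                                     resolution_rate=0.5)
    for name, profile in [("bottom", bottom_tier), ("middle", middle_tier)]:
        print(name, cost_per_resolution(profile, price_per_1k_tokens=0.01))
```

Dividing by the resolution rate is the design choice that matters here: a journey that costs little per conversation can still be expensive per resolution if half of those conversations end up with a human anyway.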

The Middle Tier Is Where the Real Risk Lives

If you position AI in the middle tier of your interaction pyramid, you are effectively asking models to take on the work that drives a significant share of CX outcomes and revenue. When those interactions are mis‑resolved, the impact shows up as higher repeat contact rates, increased agent handle time to unwind failures, customer churn, and in some cases regulatory or contractual exposure.

In practice, the failure modes I worry about most in this middle tier are:

Hallucinated or outdated answers about products, pricing, or policy.

Broken flows where customers cannot escape an AI loop or reach a human when needed.

Policy, safety, or compliance violations in regulated lines of business.

Inconsistent outcomes across segments, channels, or languages that are hard to detect without systematic testing.

Gartner’s $3 prediction is only part of the equation. The metric I encourage leaders to focus on is cost per trusted resolution: the cost of a resolution for which you have objective evidence that the AI‑handled interaction was accurate, compliant, and on brand across that middle tier.
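One way to turn that metric into a number is sketched below in Python. This is my own illustrative formulation, not a Gartner definition or an industry standard: it assumes each interaction has already been scored against the three criteria above, and it assumes a flat, hypothetical `remediation_cost_per_failure` for every interaction that was not a trusted resolution. The `Interaction` record and both function names are invented for the example.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Interaction:
    """Hypothetical record of one AI-handled interaction after evaluation."""
    cost: float      # AI cost attributed to this interaction
    resolved: bool   # the customer's issue was closed by the AI
    accurate: bool   # the answer matched current product, pricing, and policy
    compliant: bool  # no policy, safety, or regulatory violation
    on_brand: bool   # tone and content met brand guidelines


def is_trusted(i: Interaction) -> bool:
    """A resolution counts as trusted only if it passes every check."""
    return i.resolved and i.accurate and i.compliant and i.on_brand


def cost_per_trusted_resolution(
    interactions: Sequence[Interaction],
    remediation_cost_per_failure: float = 0.0,
) -> Optional[float]:
    """Total spend, including cleanup of failures, divided by trusted resolutions.

    Returns None when there are no trusted resolutions, because the metric
    is undefined in that case.
    """
    trusted = sum(1 for i in interactions if is_trusted(i))
    if trusted == 0:
        return None
    failures = len(interactions) - trusted
    total = (sum(i.cost for i in interactions)
             + failures * remediation_cost_per_failure)
    return total / trusted
```

Under these assumptions, four interactions at a cost of 1.0 each, of which two are trusted, with a remediation cost of 2.0 per failure, give (4.0 + 2 × 2.0) / 2 = 4.0 per trusted resolution, versus a naive 1.0 per interaction.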

How PumpCX Approaches AI Testing Differently

Coming from a background in large‑scale IVR and application testing, I do not see AI testing as a nice‑to‑have bolt‑on. I see it as a new kind of software factory that requires at least as much rigor as any traditional contact center platform, and often more, because model behavior is non‑deterministic and can drift over time.

We design assurance programs that treat AI‑driven CX as a continuous test‑and‑learn environment:

In pre‑production, we run large‑scale synthetic testing across IVRs, chatbots, and agent assistants, simulating thousands of journeys to find hallucinations, broken flows, and policy breaks before they reach customers.

In production, we monitor AI and human‑assisted channels with synthetic and real‑traffic signals to detect regressions, drift, and emerging failure patterns early.

For risk and audit stakeholders, we generate evidence of behavior over time so they can see exactly how AI is acting on customer journeys and whether it stays within defined guardrails.

The goal is to give leaders a clear pass/fail view on whether AI is ready to handle the next slice of the middle tier so they can scale with confidence.
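As a rough illustration of what a pass/fail readiness view could look like, here is a minimal Python sketch. It is not PumpCX's tooling or method; the `readiness_gate` function, the failure labels, and the thresholds are all hypothetical. It assumes each synthetic journey has already been labeled either as clean (`None`) or with one of the failure types named above, and it passes only if every failure rate stays within its allowed maximum.

```python
from collections import Counter
from typing import Dict, Iterable, Mapping, Optional

# Hypothetical failure labels, mirroring the failure types discussed above.
FAILURE_TYPES = ("hallucination", "broken_flow", "policy_breach")


def readiness_gate(results: Iterable[Optional[str]],
                   max_rates: Mapping[str, float]) -> Dict[str, object]:
    """Return a pass/fail verdict plus the observed failure rate per type.

    `results` holds one entry per synthetic journey: None for a clean run,
    or one of FAILURE_TYPES. A failure type missing from `max_rates` is
    treated as zero tolerance.
    """
    outcomes = list(results)
    if not outcomes:
        raise ValueError("no journey results to evaluate")
    counts = Counter(r for r in outcomes if r is not None)
    unknown = set(counts) - set(FAILURE_TYPES)
    if unknown:
        raise ValueError(f"unknown failure labels: {sorted(unknown)}")
    rates = {t: counts[t] / len(outcomes) for t in FAILURE_TYPES}
    passed = all(rates[t] <= max_rates.get(t, 0.0) for t in FAILURE_TYPES)
    return {"passed": passed, "rates": rates}


if __name__ == "__main__":
    # Illustrative run: 98 clean journeys and 2 failures, with made-up limits.
    journeys = [None] * 98 + ["hallucination", "broken_flow"]
    limits = {"hallucination": 0.02, "broken_flow": 0.02, "policy_breach": 0.0}
    print(readiness_gate(journeys, limits))
```

Defaulting unlisted failure types to zero tolerance is deliberate in this sketch: a gate should fail closed when nobody has decided how much of a given failure is acceptable.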

What This Means for CX and Risk Leaders

Viewed through a solution architecture lens, Gartner’s forecast is not just a pricing signal. It is a reminder that governance, testing, and assurance are now first‑order design constraints for AI‑driven CX. If AI resolution costs converge with or exceed offshore labor, then the winning strategies will be those that prioritize value, trust, and control rather than theoretical labor arbitrage.

If I were sitting on your steering committee, I would push for three concrete actions:

Make your interaction pyramid explicit and decide which tiers will be automated, augmented, or reserved for human agents, based on risk and value.

Treat AI agents in the middle tier as business‑critical applications that must pass continuous testing and monitoring before and after any change.

Put a vendor‑agnostic assurance layer in place so you can validate AI behavior across platforms and models, independent of any one technology stack.

That’s the only sustainable way I see to reconcile rising GenAI costs with the need to protect your brand and still capture the upside of AI‑driven CX innovation.

As more CX becomes agentic, the real metric won’t be cost per resolution; it will be cost per trusted resolution. And to guarantee that outcome, your business won’t be able to rely on testing alone. You’ll need continuous, end-to-end agentic CX assurance.